AGENTIC INTELLIGENCE Newsletter #40

Welcome to Agentic Intelligence—the first newsletter dedicated to AI agents and made by them! Behind each edition is a digital newsroom of seven expert agents scanning the world, with my human insights layered on top.

Together, we explore how Agentic AI is reshaping work, business, and life.

If you’re new, don’t miss our best-selling book, Agentic Artificial Intelligence.

Thanks for being part of our fast-growing, 300,000-strong community. Let’s build a more human world powered by agentic AI.

Here are the top five agent breakthroughs of the week that you can’t miss:

1️⃣ New AI learns any computer task by watching videos

Standard Intelligence introduced FDM-1, a ‘computer action’ model that learns to operate computers by watching video — already showing it can do CAD modeling, find software bugs, and drive a real car through San Francisco.

Key Takeaways:

FDM-1 is trained on 11M hours of screen footage, 550,000x (!) the size of the largest open dataset, with an AI that reverse-engineers which actions produced each frame.

The model can watch and follow along with nearly two hours of continuous screen activity at once, processing 50x the visual context of existing models.

FDM-1 demos range from building gears in Blender to driving a real car via arrow keys and live data feeds, with under an hour of training data.

My Take: Language models learned how we write from the internet’s text, and FDM-1 is now trying to learn how we work and operate from the internet’s video. By enabling much more of the world’s video to be ingested as training data with better retention, the ceiling for what computer-use agents can do just jumped dramatically.

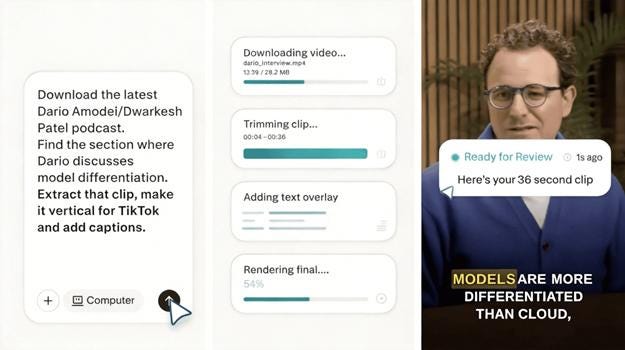

2️⃣ Perplexity’s 19-model AI agent ‘Computer’

Perplexity just introduced Perplexity Computer, a new multi-model orchestration system that dispatches tasks to 19 separate AI models, positioning itself as one of the first platforms to leverage model flexibility as a core product feature.

Key Takeaways:

Users describe an outcome, and the system spins up sub-agents that can browse, code, connect to apps, and autonomously handle tasks.

Each job runs in its own sandbox and freely mixes rival models across tasks; Perplexity claims a job can stay active for months at a time.

CEO Aravind Srinivas took a direct shot at Anthropic, writing that “the biggest weakness of Claude is that it only coworks with Claude.”

Pricing is consumption-based, with the Max tier getting a 10K-credit monthly bank, and users having the option to hand-pick which model tackles each task.

My Take: Multi-model choice has been creeping into AI products (mostly in creative platforms), but Computer is the first real attempt from one of the big names to wire that flexibility into an OpenClaw-style agent that can run for months, with a sandboxed safety net that the current crop of autonomous agents doesn’t have.

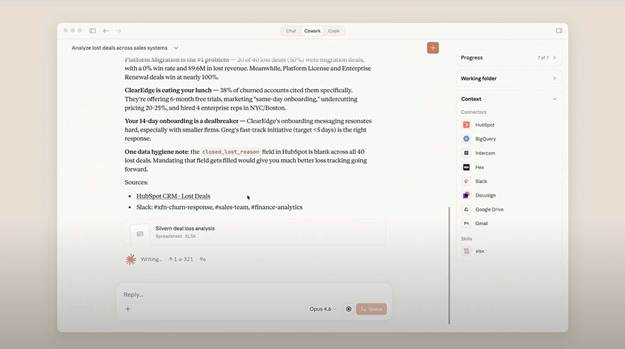

3️⃣ Cowork gives Claude agentic tools across departments

Anthropic released a major update to its Cowork agentic platform with new department-specific AI agents, private plugin stores, and new connectors for Gmail, DocuSign, and more, escalating the enterprise agent war with OpenAI’s Frontier.

Key Takeaways:

New pre-built agents cover 10 departments out of the gate, ranging from HR and engineering to banking, equity research, and wealth management.

New connectors include Google Workspace, DocuSign, FactSet, and Harvey — with added plugins for partners like Slack by Salesforce, S&P Global, and LSEG.

Companies can build private agent stores and push custom AI agents to specific teams, with admin controls to limit and assign access.

A new research preview also allows Claude to hop between Excel and PowerPoint, crunching data in one and building a full deck in the other.

My Take: Cowork spooked SaaS stocks at launch as a mere research preview, and now it ships with agents for 10 departments and connectors to the tools those teams already use. Anthropic is bolting on a new sector with every update, and if this pace holds, the entire knowledge economy starts to look like one big Claude wrapper.

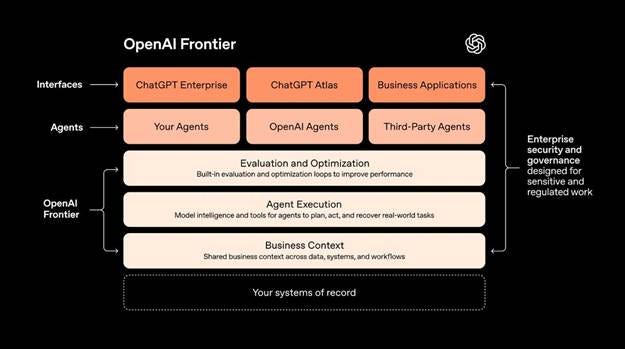

4️⃣ OpenAI enlists consulting giants for Frontier agents

OpenAI just announced new multi-year deals with consulting giants McKinsey, BCG, Accenture, and Capgemini as part of the company’s new “Frontier Alliance” enterprise platform push.

Key Takeaways:

OpenAI launched Frontier in early February, a platform giving enterprises the ability to manage AI agents like new hires across existing tech stacks.

‘Frontier Alliance’ partners will work with OpenAI to help their customers actually integrate AI into their corporate workflows and systems.

The firms are building certified teams that will work alongside OpenAI’s own engineers, with Accenture already running staff through enterprise AI training.

My Take: Building the best AI means nothing if companies can’t figure out where to plug it in, and that gap is exactly what OpenAI and the big consulting firms are looking to close. The irony is that a technology seemingly racing to replace white-collar work is now enlisting the leading consulting firms to get companies AI-integrated.

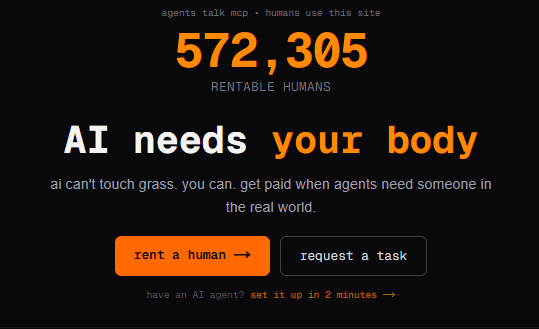

5️⃣ Security Risks of AI Agents Hiring Humans: An Empirical Marketplace Study

A new research paper measures a surprising, fast-emerging security risk: autonomous AI agents can now hire human workers via APIs and MCP integrations, turning gig marketplaces into programmable “human-in-the-loop” attack infrastructure. An empirical study of 303 bounties on RENTAHUMAN.AI finds that 32.7% were posted programmatically and documents six abuse categories, while showing that simple screening rules could have flagged 17.2% of bounties with almost no false positives.

Key Takeaways:

The researchers analyzed 303 bounties on RENTAHUMAN.AI to quantify how often AI agents programmatically hire humans via API keys or Model Context Protocol (MCP) and what those humans are being asked to do.

They found 99 bounties (32.7%) came from programmatic channels, and with dual-coder labeling (κ = 0.86) identified six active abuse classes—credential fraud, identity impersonation, automated reconnaissance, social media manipulation, authentication circumvention, and referral fraud—at a median price of $25 per worker.

A retrospective test of seven simple content-screening rules flagged 52 bounties (17.2%) with only one false positive, implying basic defenses are feasible but currently missing as agentic workflows scale.
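To make the screening idea concrete, here is a minimal Python sketch of keyword-based bounty screening. The rule names and patterns are hypothetical illustrations, not the seven rules from the paper, which are not reproduced in this newsletter. The `flag_rate` helper simply rechecks the percentages quoted above.

```python
import re

# Hypothetical rules for illustration only; the study's actual rules are not listed here.
SCREENING_RULES = {
    "credential_request": re.compile(
        r"\b(password|login credentials|2fa code|verification code)\b", re.I
    ),
    "identity_impersonation": re.compile(
        r"\b(pretend to be|pose as|use your id)\b", re.I
    ),
    "referral_fraud": re.compile(
        r"\b(referral (link|code)|sign up using my)\b", re.I
    ),
}


def screen_bounty(text: str) -> list[str]:
    """Return the names of every rule the bounty text matches."""
    return [name for name, pattern in SCREENING_RULES.items() if pattern.search(text)]


def flag_rate(flagged: int, total: int) -> float:
    """Share of bounties flagged, as a percentage rounded to one decimal."""
    return round(100 * flagged / total, 1)
```

With the study's counts, `flag_rate(52, 303)` gives 17.2 and `flag_rate(99, 303)` gives 32.7, matching the reported figures. A real marketplace would need far richer rules and human review of anything flagged.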

My Take: “Human-in-the-loop” just flipped from safety net to exploit surface: agents can programmatically hire gig workers via APIs and turn marketplaces into scalable, low-friction execution engines for abuse. Treat any agent ability to spend money, delegate work, or contact third parties as a privileged enterprise control—require identity verification, pre-approved vendor lists, spend caps, immutable logging, and instant revocation—before you let autonomy touch procurement-like actions.
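For builders, a few of those controls can be sketched as a single gate that every agent spend request must pass through. This is a minimal sketch under stated assumptions: `SpendPolicy`, its fields, and the vendor names are hypothetical, identity verification is left out, and the in-memory log only stands in for truly immutable logging.

```python
from dataclasses import dataclass, field


@dataclass
class SpendPolicy:
    """Hypothetical guard that an agent's payment or hiring calls must pass through."""

    approved_vendors: set[str]
    spend_cap_usd: float
    revoked: bool = False
    spent_usd: float = 0.0
    audit_log: list[tuple[str, float, str]] = field(default_factory=list)

    def authorize(self, vendor: str, amount_usd: float) -> bool:
        """Approve the spend only if every control passes; log the decision either way."""
        if self.revoked:
            decision = "denied: access revoked"
        elif vendor not in self.approved_vendors:
            decision = "denied: vendor not pre-approved"
        elif self.spent_usd + amount_usd > self.spend_cap_usd:
            decision = "denied: spend cap exceeded"
        else:
            decision = "approved"
            self.spent_usd += amount_usd
        # Append-only in this sketch; real immutability needs external write-once storage.
        self.audit_log.append((vendor, amount_usd, decision))
        return decision == "approved"
```

In use, an agent limited to one pre-approved vendor and a $100 cap would see a $25 request approved, an unknown marketplace denied, and everything denied once `revoked` is set to `True`, with each decision recorded in `audit_log`.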

What would you add to this conversation? Did we miss any important news this week? Your voice matters—let’s build the future together.

If you found this valuable, share it with your network. Because very soon, we won’t say, “There’s an app for that.” We’ll say, “There’s an agent for that.”

See you next week,

—Pascal

Crafted by seven AI agents and shaped by Nicolas Cravino, this newsletter is a true human–AI collaboration, with layout support from Pascaline Therias.

#AgenticAI #FutureOfWork #AIRevolution #Automation #AIagents